I’ve written a bit in my last two blog posts about the work I’ve been doing in inter-device synchronised playback using GStreamer. I introduced the library and then demonstrated its use in building video walls.

The important question in synchronisation, of course, is how closely in sync the streams are. The video in my previous post gave a glimpse of that, and in this post I’ll expand on it with a more rigorous, quantifiable approach.

Before I start, a quick note: I am currently providing freelance consulting around GStreamer, PulseAudio and open source multimedia in general. If you’re looking for help with any of these, do get in touch.

Quantifying what?

What is it that we are trying to measure? Let’s look at this in terms of the outcome — I have two computers, on a network. Using the gst-sync-server library, I play a stream on both of them. The ideal outcome is that the same video frame is displayed at exactly the same time, and the audio sample being played out of the respective speakers is also identical at any given instant.

As we saw previously, the video output is not a good way to measure what we want. This is because video displays are updated in sync with the display clock, over which consumer hardware generally gives us no control. Besides, our eyes are not that sensitive to minor differences in timing unless images are side-by-side. After all, we’re fooling them with static pictures that change every 16.67ms or so (on a 60 Hz display).

Using audio, though, we should be able to do better. Digital audio streams for music and videos typically consist of 44100 or 48000 samples a second, so we have much finer granularity than video provides. The human ear is also fairly sensitive to timing. If you hear the same sound twice with a gap larger than about 10 ms, you will perceive two distinct sounds, and the echo will annoy you to no end.
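To put numbers on that difference in granularity, here is a small Python sketch comparing the duration of one audio sample with one video frame. The 60 Hz refresh rate is an assumption that matches the ~16.67ms figure above.

```python
def period_us(rate_hz: float) -> float:
    """Duration of one sample (or one video frame) in microseconds."""
    return 1_000_000.0 / rate_hz


video_frame_us = period_us(60)  # assumed 60 Hz display refresh
for rate in (44_100, 48_000):
    sample_us = period_us(rate)
    print(f"{rate} Hz: {sample_us:.2f} us per sample, "
          f"{video_frame_us / sample_us:.0f} samples per 60 Hz frame")
print(f"60 Hz video: {video_frame_us:.2f} us per frame")
```

At 48000 samples a second, a single sample lasts about 20.8µs, so one 60 Hz frame spans 800 samples.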

Measuring audio is also good enough because once you’ve got audio in sync, GStreamer will take care of A/V sync itself.

Setup

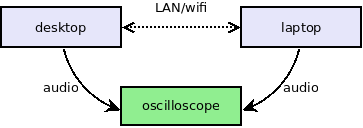

Okay, so now that we know what we want to measure, but how do we measure it? The setup is illustrated below:

As before, I’ve set up my desktop PC and laptop to play the same stream in sync. The stream being played is a local audio file — I’m keeping the setup simple by not adding network streaming to the equation.

The audio itself is just a tick sound every second. The tick is a simple 440 Hz sine wave (A₄ for the musically inclined) that runs for 1600 samples. It sounds something like this:

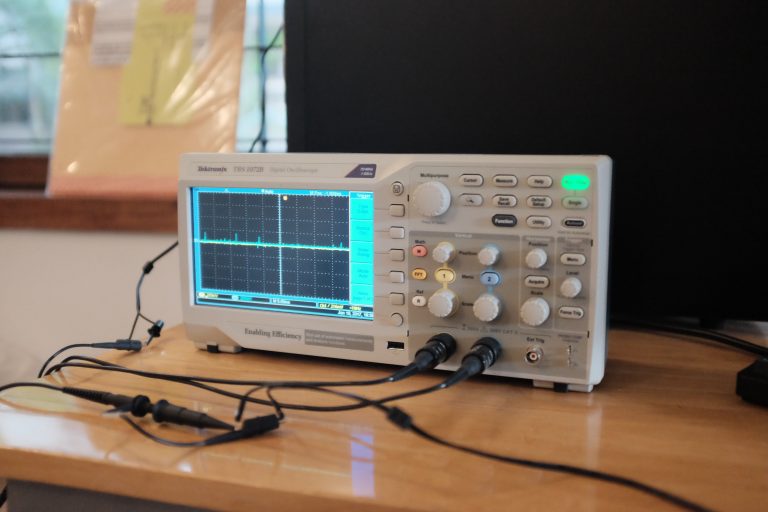
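For anyone wanting to reproduce a similar test signal, here is a minimal Python sketch that generates a tick track using only the standard library. It is an illustration rather than the exact file used here: the 48000 Hz sample rate, 16-bit mono format and half-scale amplitude are assumptions, since only the tone frequency and tick length are given above.

```python
import math
import struct
import wave

SAMPLE_RATE = 48_000  # assumed; the post does not state the file's sample rate
TONE_HZ = 440.0       # A4, as described above
TICK_SAMPLES = 1600   # length of each tick in samples
AMPLITUDE = 0.5       # assumed: half of full scale, well clear of clipping


def tick_second(rate: int = SAMPLE_RATE) -> list:
    """Return one second of 16-bit samples: a 440 Hz tick followed by silence."""
    samples = []
    for n in range(rate):
        if n < TICK_SAMPLES:
            value = AMPLITUDE * math.sin(2 * math.pi * TONE_HZ * n / rate)
        else:
            value = 0.0
        samples.append(int(round(value * 32767)))
    return samples


def write_ticks(path: str, seconds: int = 10) -> None:
    """Write `seconds` ticks, one per second, as a 16-bit mono PCM WAV file."""
    one_second = tick_second()
    frames = struct.pack(f"<{len(one_second)}h", *one_second) * seconds
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)  # 2 bytes per sample = 16-bit
        wav.setframerate(SAMPLE_RATE)
        wav.writeframes(frames)
```

Calling `write_ticks("ticks.wav")` writes ten seconds of ticks. At the assumed 48000 Hz, 1600 samples is about 33ms of tone.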

I’ve connected the 3.5mm audio outputs of both computers to my faithful digital oscilloscope (a Tektronix TBS 1072B, if you want to know). Measuring synchronisation is now really a question of seeing how far apart the leading edges of the two ticks are.
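If the scope can export both channels as sample data, the same measurement can be automated. The Python sketch below finds the first sample on each channel whose magnitude exceeds a threshold and converts the index difference into time. The export format (two lists of voltages at a fixed sample interval), the 0.05 V default threshold and the `synthetic_trace` test helper are all assumptions for illustration. The threshold has to sit above the noise floor, and the result is only accurate if both outputs have similar levels, so that each waveform crosses the threshold at the same point in the tick.

```python
import math


def leading_edge_index(trace, threshold):
    """Return the index of the first sample whose magnitude exceeds `threshold`."""
    for i, v in enumerate(trace):
        if abs(v) > threshold:
            return i
    return None


def edge_offset_s(ch1, ch2, sample_interval_s, threshold=0.05):
    """Seconds by which the tick on ch2 starts after the one on ch1 (negative if earlier)."""
    i1 = leading_edge_index(ch1, threshold)
    i2 = leading_edge_index(ch2, threshold)
    if i1 is None or i2 is None:
        raise ValueError("no tick found on one of the channels")
    return (i2 - i1) * sample_interval_s


def synthetic_trace(start, n=5000, interval_s=2e-6, freq_hz=440.0, volts=1.0):
    """Hypothetical test data: silence, then a sine burst starting at sample `start`."""
    trace = []
    for i in range(n):
        if i < start:
            trace.append(0.0)
        else:
            t = (i - start) * interval_s
            trace.append(volts * math.sin(2 * math.pi * freq_hz * t))
    return trace


# Made-up data: channel 2 starts 25 samples (50 us at 2 us/sample) late.
ch1 = synthetic_trace(start=1000)
ch2 = synthetic_trace(start=1025)
print(f"offset: {edge_offset_s(ch1, ch2, 2e-6) * 1e6:.1f} us")
```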

Of course, this assumes we’re not more than 1s out of sync (that’s the periodicity of the tick itself), and I’ve verified that by playing non-periodic sounds (any song or video) and making sure they’re in sync as well. You can trust me on this, or better yet, get the code and try it yourself! :)

The last piece to worry about is the network. How well we can sync the two streams depends on how well we can synchronise the clocks of the pipelines running on each of the two devices. I’ll talk about how this works in a subsequent post, but I took measurements on both a wired and a wireless network.

Measurements

Before we get into it, we should keep in mind that due to how we synchronise streams — using a network clock — how in-sync our streams are will vary over time depending on the quality of the network connection.

If this variation is small enough, it won’t be noticeable. If it is large (tens of milliseconds), then we may start to notice it as an echo, or as glitches when the pipeline tries to correct for the lack of sync.
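Why network quality matters can be illustrated with the classic NTP-style exchange, sketched below in Python. This is the textbook technique, not a description of exactly what GStreamer’s network clock does: the client timestamps a request (t0), the server timestamps its receipt (t1) and its reply (t2), and the client timestamps the reply’s arrival (t3). The offset estimate assumes both directions of travel take equally long, so any asymmetry shows up directly as error, bounded by half the round-trip time. The timestamps in the usage lines are made up.

```python
def ntp_offset_and_rtt(t0, t1, t2, t3):
    """Estimate clock offset (server minus client) and round-trip time.

    t0: client sends request   (client clock)
    t1: server receives it     (server clock)
    t2: server sends reply     (server clock)
    t3: client receives reply  (client clock)
    """
    rtt = (t3 - t0) - (t2 - t1)           # network time only, excluding server processing
    offset = ((t1 - t0) + (t2 - t3)) / 2  # exact only if both legs take equally long
    return offset, rtt


# Made-up example in microseconds: the server clock is 1000 us ahead of the client.
# Symmetric network, 200 us each way: the estimate is exact.
print(ntp_offset_and_rtt(0, 1200, 1210, 410))   # (1000.0, 400)
# Asymmetric network, 200 us out and 2000 us back: the estimate is off by 900 us.
print(ntp_offset_and_rtt(0, 1200, 1210, 2210))  # (100.0, 2200)
```

The second case shows how a jittery or asymmetric link translates into offset error, even though each individual exchange looks perfectly valid.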

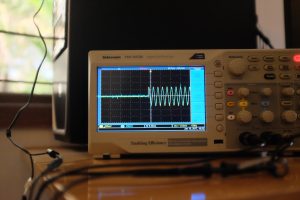

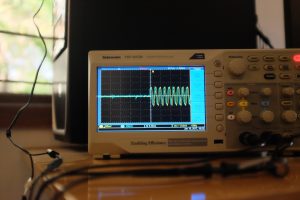

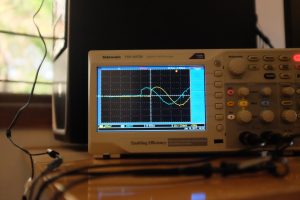

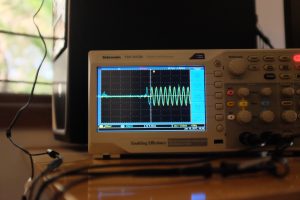

In the first setup, my laptop and desktop are connected to each other directly via a LAN cable. The result looks something like this:

- Sync on LAN, working well

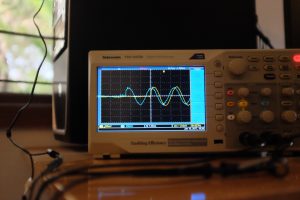

- Sync on LAN, working well, up close

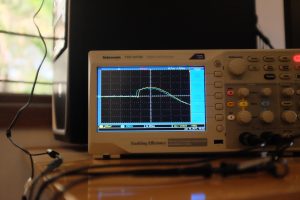

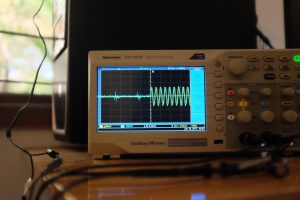

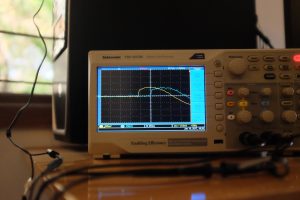

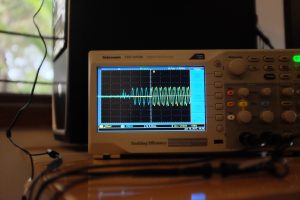

- Sync on LAN, slightly off

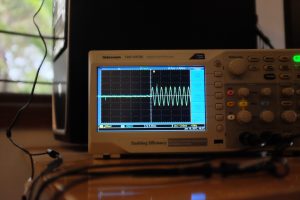

- Sync on LAN, slightly off, up close

The first two images show the best case: we need to zoom in really close to see how out of sync the audio is, and it’s roughly 50µs.

The next two images show the “worst case”. This time, the zoomed-out (5ms) view already shows a visible offset, and on zooming in, we see that it’s on the order of 500µs.

So even our bad case is actually quite good — sound travels at about 340 m/s, so 500µs is the equivalent of two speakers about 17cm apart.
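The same conversion works for any measured offset. A short Python helper, using the same approximate 340 m/s figure (the actual speed of sound varies with air temperature), applied to the LAN numbers above and the wifi numbers further down:

```python
SPEED_OF_SOUND_M_S = 340.0  # approximate value used in the text


def equivalent_distance_cm(offset_s):
    """Distance sound travels in `offset_s` seconds, in centimetres."""
    return offset_s * SPEED_OF_SOUND_M_S * 100


for label, offset_s in [("LAN, best case", 50e-6),
                        ("LAN, worst case", 500e-6),
                        ("wifi, average", 300e-6),
                        ("wifi, bad", 2.5e-3)]:
    print(f"{label}: {offset_s * 1e6:.0f} us ~ {equivalent_distance_cm(offset_s):.1f} cm")
```

This gives about 1.7cm for the 50µs best case and 85cm for a 2.5ms excursion; a 20ms excursion corresponds to roughly 6.8m.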

Now let’s make things a little more interesting. With both my laptop and desktop connected to a wifi network:

- Sync on wifi, okay on average

- Sync on wifi, okay on average, up close

- Sync on wifi, goes off on bad connection

- Sync on wifi, goes off, up close

- Sync on wifi, when it’s bad

- Sync on wifi, when it’s good

On average, the sync can be quite okay. The first pair of images shows sync to be within about 300µs.

However, the wifi on my desktop is flaky, so you can see the sync drift off by up to 2.5ms in the next pair. In my setup, it sometimes drifts by as much as 10–20ms before returning to the average case. The next two images show it going back and forth.

Why does this happen? Well, let’s take a quick look at what ping statistics from my desktop to my laptop look like:

That’s not good — you can see that the minimum, average and maximum RTT are very different. Our network clock logic probably needs some tuning to deal with this much jitter.
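The summary that ping prints, and one simple way a clock could cope with this much jitter, can be sketched in Python. The sample values are made up. `mdev` is computed as the population standard deviation, which is how I understand iputils ping to define it. The `low_rtt_samples` filter is a hypothetical tuning idea, not something gst-sync-server or GStreamer is claimed to do: since an NTP-style offset estimate can be off by up to half the round-trip time, exchanges with a low RTT are the most trustworthy, so one option is to discard samples whose RTT is well above the minimum seen.

```python
import math


def rtt_summary(rtts_ms):
    """Return (min, avg, max, mdev) for a list of round-trip times in ms."""
    n = len(rtts_ms)
    avg = sum(rtts_ms) / n
    mdev = math.sqrt(sum((r - avg) ** 2 for r in rtts_ms) / n)  # population std dev
    return min(rtts_ms), avg, max(rtts_ms), mdev


def low_rtt_samples(samples, factor=1.5):
    """Keep (rtt, offset) pairs whose RTT is at most `factor` times the minimum RTT.

    Hypothetical filter: offset error is bounded by rtt / 2, so slow exchanges
    carry the least reliable offset estimates.
    """
    best = min(rtt for rtt, _ in samples)
    return [(rtt, off) for rtt, off in samples if rtt <= factor * best]


# Made-up (rtt_ms, offset_ms) samples from a jittery wifi link.
samples = [(2.1, 0.30), (2.4, 0.28), (35.0, 9.5), (2.2, 0.31), (80.0, -22.0)]
print("min/avg/max/mdev = %.1f/%.1f/%.1f/%.1f ms" % rtt_summary([r for r, _ in samples]))
print("kept:", low_rtt_samples(samples))
```

With a 1.5× factor, only the three exchanges near the 2.1ms minimum survive, and their offsets agree closely with each other.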

Conclusion

These measurements show that we can get some (in my opinion) pretty good synchronisation between devices using GStreamer. I wrote the gst-sync-server library to make it easy to build applications on top of this feature.

The obvious area to improve is how we cope with jittery networks. We’ve added some infrastructure to capture and replay clock synchronisation messages offline. What remains is to build a large enough body of good and bad cases, and then tune the sync algorithm to work as well as possible with all of these.

Also, Florent over at Ubicast pointed out a nice tool they’ve written to measure A/V sync on the same device. It would be interesting to modify this to allow for automated measurement of inter-device sync.

In a future post, I’ll write more about how we actually achieve synchronisation between devices, and how we can go about improving it.

Peter Meerwald-Stadler

January 19, 2017 — 7:09 pm

In my experience, turning off power management on USB WiFi sticks helps a lot to improve jitter.

Arun

January 20, 2017 — 12:13 am

Oh, interesting, thanks! I’ll try this out tomorrow to see if it helps. The desktop is quite close to the router, so it does seem like something extraneous to the wifi connectivity itself.

David Lindsay

January 20, 2017 — 8:50 am

Found this via lobste.rs.

This is something I’ve been interested in for a while; I’ve been noting down details as time’s gone by.

I once briefly chatted with someone who was designing a network-synced wireless speaker system (not the one that rhymes with Phonos – a smaller competitor). For obvious reasons they didn’t want to go too much into their (Linux-based) embedded setup, but they did drop that JACK was one of the ingredients in their special sauce. (I can try and dig out their contact info if you think you might be able to convince them to share more with you.)

I haven’t looked too much into JACK myself, but it does have a reputation for managing audio with very low latency, and it has a network transport built right into the stack, so it makes sense. This was only a couple years ago, too; my reaction was “wow, JACK, not PulseAudio!” – JACK apparently still has it in some areas. I do wonder how well it compares with GStreamer.

You’re probably already aware of mplayer’s UDP network sync code, the fact that it only works when you’re playing videos, and the fact that it’s garbage. :D

I spent way too much time one afternoon while too skittish to focus on what I was doing and came up with the following fun thought experiment that doesn’t work very well (=P):

```shell
stdbuf -o0 mplayer -cache 8192 -ao pcm:nowaveheader:file=/dev/stderr /path/to/file.ext 2> >(stdbuf -i0 -o0 tee >(stdbuf -i0 -o0 socat stdin udp-sendto:10.0.0.1:9999) | stdbuf -i0 -o0 play -r 44100 -t raw -e signed-integer -b 16 -c 2 --endian little -)
```

```shell
socat udp-recvfrom:9999,fork stdout | play -r 44100 -t raw -e signed-integer -b 16 -c 2 --endian little -
```

The mess in the first command is a splitter that uses mplayer as an “everything to PCM” converter, splits the output using tee, feeds the first half of the split into socat to UDP-datagram-bomb it over to (in this example) 10.0.0.1, and feeds the second half of the split over to `play` locally. Because I’m using a `command > >(command)` invocation, keyboard input to mplayer actually still works.

NOTE that you might need to switch to 24-bit (-b 24) audio for some file types; mplayer will tell you (see the “AO [pcm] …” and “Samplerate: …” lines). Turn the speakers down just in case you get (literal) static. Also note the hardcoded IP: this is designed to go via direct Ethernet.

Obviously the second command runs on the receiver.

As you can see this has no timing info, and so naturally it falls apart – sadly even after just a few minutes. I’ve found that pausing the source, waiting for all the buffers to flush (which takes a second or two due to pipe buffering between mplayer, tee, and play) and then resuming it seems to resync everything nicely.

Hitting ^S then ^Q on the sender (to send XOFF and then XON to the tty) is a good way to glitch the stream if you want to rapidly introduce latency for whatever reason (since mplayer and/or sox apparently use blocking writes to the terminal).

If the output is desynced, adding “delay 500s” after “--endian little -” (so like “--endian little - delay 500s”) adds (in this case) a 500-sample buffer between the input and the sound card. To make the sound come out of the remote machine first, add 500s of delay both locally and remotely, then bring the remote delay down. Unfortunately mplayer’s delay code works in 100ms steps and is too coarse. Restarting can get old quickly, but I am not aware of any “adjustable while running” approach. This is by no means perfect.

I stumbled on https://github.com/badaix/snapcast the other day; while re-locating that just now I also found https://github.com/mikebrady/shairport-sync and https://github.com/jackaudio/jackaudio.github.com/wiki/WalkThrough_User_NetJack2, and https://www.google.com.au/search?q=linux+network+audio+sync+github returned more promising-looking things than I thought it would.

Also, the Wi-Fi Alliance just released TimeSync (as in, literally just: about a week ago!), which will hopefully (I have no idea or details yet!) be something like “this bunch of PHYs will fire the same monotonically increasing numbers at their attached host systems with literally no drift”, which of course you can use to sync audio and video.

Depending on how much of the relevant industr{y,ies} are open source(-friendly) this tech might take a while to get an open (or at least free) API. I have no idea.

Arun

January 24, 2017 — 3:26 pm

Wow, thanks for all the information. Going through it one thing at a time:

In general, I didn’t find audio latency to be a big issue: having non-huge, but reliable and identical, overall latencies on all machines should be a good way to guarantee sync.

Relatedly, PulseAudio can go down to ~20ms latency. This is not as good as JACK, which can run at single-digit millisecond latencies due to how it works. We need to do better in PA, but some of our design choices need revising to get to low single-digit latencies.

On the embedded appliances I’ve seen, using ALSA directly (i.e. you don’t need JACK) works well enough, since you don’t need a mixer and having direct access to the device is fastest. Especially if you don’t need to do Bluetooth etc.

If you don’t just throw all your data onto UDP, it should be possible to get better sync. Either via RTP, or if you’ve not got live media, HTTP or such should be better still. The clients can then deal with synchronising based on timestamps. For the live case, there are ideas on achieving this on RTP streams that already work in GStreamer — https://gstconf.ubicast.tv/videos/synchronised-multi-room-media-playback-and-distributed-live-media-processing-and-mixing-with-gstreamer/

I’ve looked at some of the things you pointed to, and one thing that was interesting to me that you missed was Google (Chrome)Cast and the fact that its implementation is now open in the Chromium sources — they’re a cheap way to do multiroom audio, though I’ve yet to see how well that works in practice.

I’ll take a look at TimeSync soon — something like that should certainly make our lives much easier if it works!

Jörg Krause

July 21, 2017 — 2:12 am

Can you link to the Chromium sources containing the multi room implementation, please?