In case you missed it — we got PulseAudio 9.0 out the door, with the echo cancellation improvements that I wrote about. Now is probably a good time for me to make good on my promise to expand upon the subject of beamforming.

As with the last post, I’d like to shout out to the wonderful folks at Aldebaran Robotics who made this work possible!

Beamforming

Beamforming as a concept is used in various aspects of signal processing including radio waves, but I’m going to be talking about it only as applied to audio. The basic idea is that if you have a number of microphones (a mic array) in some known arrangement, it is possible to “point” or steer the array in a particular direction, so sounds coming from that direction are made louder, while sounds from other directions are rendered softer (attenuated).

Practically speaking, it should be easy to see the value of this on a laptop, for example, where you might want to focus a mic array to point in front of the laptop, where the user probably is, and suppress sounds that might be coming from other locations. You can see an example of this in the webcam below. Notice the grilles on either side of the camera — there is a microphone behind each of these.

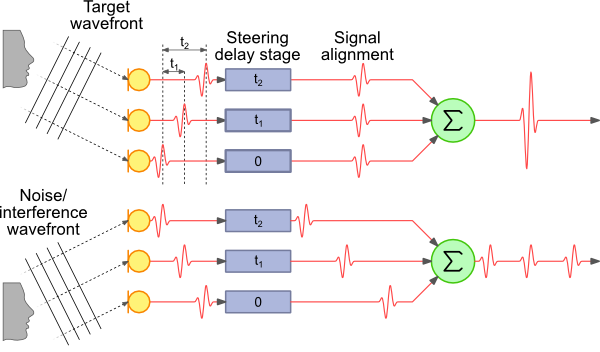

This raises the question of how this effect is achieved. The simplest approach is called “delay-sum beamforming”. The key idea in this approach is that if we have an array of microphones that we want to steer the array at a particular angle, the sound we want to steer at will reach each microphone at a different time. This is illustrated below. The image is taken from this great article describing the principles and math in a lot more detail.

In this figure, you can see that the sound from the source we want to listen to reaches the top-most microphone slightly before the next one, which in turn captures the audio slightly before the bottom-most microphone. If we know the distance between the microphones and the angle to which we want to steer the array, we can calculate the additional distance the sound has to travel to each microphone.

The speed of sound in air is roughly 340 m/s, and thus we can also calculate how much of a delay occurs between the same sound reaching each microphone. The signal at the first two microphones is delayed using this information, so that we can line up the signal from all three. Then we take the sum of the signal from all three (actually the average, but that’s not too important).

The signal from the direction we’re pointing in is going to be strongly correlated, so it will turn out loud and clear. Signals from other directions will end up being attenuated because they will only occur in one of the mics at a given point in time when we’re summing the signals — look at the noise wavefront in the illustration above as an example.

Implementation

(this section is a bit more technical than the rest of the article, feel free to skim through or skip ahead to the next section if it’s not your cup of tea!)

The devil is, of course, in the details. Given the microphone geometry and steering direction, calculating the expected delays is relatively easy. We capture audio at a fixed sample rate — let’s assume this is 32000 samples per second, or 32 kHz. That translates to one sample every 31.25 µs. So if we want to delay our signal by 125µs, we can just add a buffer of 4 samples (4 × 31.25 = 125). Sound travels about 4.25 cm in that time, so this is not an unrealistic example.

Now, instead, assume the signal needs to be delayed by 80 µs. This translates to 2.56 samples. We’re working in the digital domain — the mic has already converted the analog vibrations in the air into digital samples that have been provided to the CPU. This means that our buffer delay can either be 2 samples or 3, not 2.56. We need another way to add a fractional delay (else we’ll end up with errors in the sum).

There is a fair amount of academic work describing methods to perform filtering on a sample to provide a fractional delay. One common way is to apply an FIR filter. However, to keep things simple, the method I chose was the Thiran approximation — the literature suggests that it performs the task reasonably well, and has the advantage of not having to spend a whole lot of CPU cycles first transforming to the frequency domain (which an FIR filter requires)(edit: converting to the frequency domain isn’t necessary, thanks to the folks who pointed this out).

I’ve implemented all of this as a separate module in PulseAudio as a beamformer filter module.

Now it’s time for a confession. I’m a plumber, not a DSP ninja. My delay-sum beamformer doesn’t do a very good job. I suspect part of it is the limitation of the delay-sum approach, partly the use of an IIR filter (which the Thiran approximation is), and it’s also entirely possible there is a bug in my fractional delay implementation. Reviews and suggestions are welcome!

A Better Implementation

The astute reader has, by now, realised that we are already doing a bunch of processing on incoming audio during voice calls — I’ve written in the previous article about how the webrtc-audio-processing engine provides echo cancellation, acoustic gain control, voice activity detection, and a bunch of other features.

Another feature that the library provides is — you guessed it — beamforming. The engineers at Google (who clearly are DSP ninjas) have a pretty good beamformer implementation, and this is also available via module-echo-cancel. You do need to configure the microphone geometry yourself (which means you have to manually load the module at the moment). Details are on our wiki (thanks to Tanu for that!).

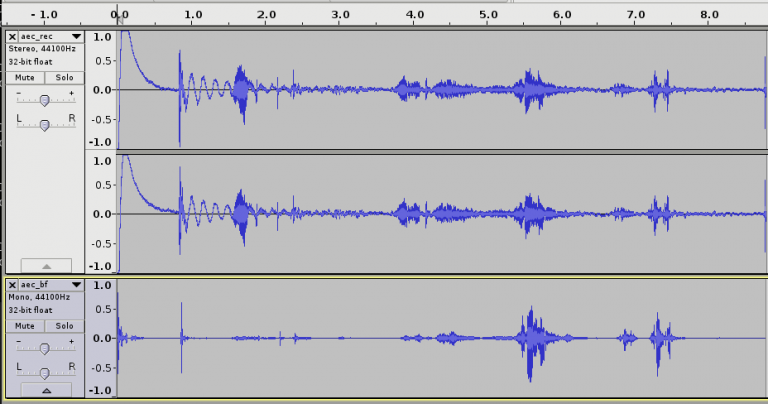

How well does this work? Let me show you. The image below is me talking to my laptop, which has two microphones about 4cm apart, on either side of the webcam, above the screen. First I move to the right of the laptop (about 60°, assuming straight ahead is 0°). Then I move to the left by about the same amount (the second speech spike). And finally I speak from the center (a couple of times, since I get distracted by my phone).

The upper section represents the microphone input — you’ll see two channels, one corresponding to each mic. The bottom part is the processed version, with echo cancellation, gain control, noise suppression, etc. and beamforming.

You can also listen to the actual recordings …

… and the processed output.

Feels like black magic, doesn’t it?

Finishing thoughts

The webrtc-audio-processing-based beamforming is already available for you to use. The downside is that you need to load the module manually, rather than have this automatically plugged in when needed (because we don’t have a way to store and retrieve the mic geometry). At some point, I would really like to implement a configuration framework within PulseAudio to allow users to set configuration from some external UI and have that be picked up as needed.

Nicolas Dufresne has done some work to wrap the webrtc-audio-processing library functionality in a GStreamer element (and this is in master now). Adding support for beamforming to the element would also be good to have.

The module-beamformer bits should be a good starting point for folks who want to wrap their own beamforming library and have it used in PulseAudio. Feel free to get in touch with me if you need help with that.